As an example, if you have `single` as your preferred style, we'll now allow this:

```py

assert s.to_python(123) == (

"123 info=SerializationInfo(include=None, exclude=None, mode='python', by_alias=True, exclude_unset=False, "

"exclude_defaults=False, exclude_none=False, round_trip=False)"

)

```

Previously, the second line of the implicit string concatenation would be flagged as invalid, despite the _first_ line requiring double quotes. (Note that we'll accept either single or double quotes for that second line.)

Mechanically, this required that we process sequences of `Tok::String` rather than a single `Tok::String` at a time. Prior to iterating over the strings in the sequence, we check if any of them require the non-preferred quote style; if so, we let _any_ of them use it.

Closes#2400.

In order to avoid confusing new developers. When a debug build panics

chances are that the panic is caused by local changes and should in

fact not be reported on GitHub.

RuleSelector implemented PartialOrd & Ord because ruff::flake8_to_ruff

was using RuleSelector within a BTreeSet (which requires contained

elements to implement Ord). There however is no inherent order to

rule selectors, so PartialOrd & Ord should not be implemented.

This commit changes BTreeSet<RuleSelector> to HashSet<RuleSelector>

and adds an explicit sort calls based on the serialized strings,

letting us drop the PartialOrd & Ord impls in favor of a Hash impl.

This is a followup to #2361. The isort check still had an issue in a rather specific case: files with a multiline import, indented with tabs, and not containing any indented blocks.

The root cause is this: [`Stylist`'s indentation detection](ad8693e3de/src/source_code/stylist.rs (L163-L172)) works by finding `Indent` tokens to determine the type of indentation used by a file. This works for indented code blocks (loops/classes/functions/etc) but does not work for multiline values, so falls back to 4 spaces if the file doesn't contain code blocks.

I considered a few possible solutions:

1. Fix `detect_indentation` to avoid tokenizing and instead use some other heuristic to determine indentation. This would have the benefit of working in other places where this is potentially an issue, but would still fail if the file doesn't contain any indentation at all, and would need to fall back to option 2 anyways.

2. Add an option for specifying the default indentation in Ruff's config. I think this would confusing, since it wouldn't affect the detection behavior and only operate as a fallback, has no other current application and would probably end up being overloaded for other things.

3. Relax the isort check by comparing the expected and actual code's lexed tokens. This would require an additional lexing step.

4. Relax the isort check by comparing the expected and actual code modulo whitespace at the start of lines.

This PR does approach 4, which in addition to being the simplest option, has the (expected, although I didn't benchmark) added benefit of improved performance, since the check no longer needs to do two allocations for the two `dedent` calls. I also believe that the check is still correct enough for all practical purposes.

This is another temporary fix for the problem described in #2289 and #2292. Rather than merely warning, we now disable the incompatible rules (in addition to the warning). I actually think this is quite a reasonable solution, but we can revisit later. I just can't bring myself to ship another release with autofix broken-by-default 😂

If `allow-multiline = false` is set, then if the user enables `explicit-string-concatenation` (`ISC003`), there's no way for them to create valid multiline strings. This PR notes that they should turn off `ISC003`.

Closes#2362.

We now only trigger `logging-exc-info` and `logging-redundant-exc-info` when in an exception handler, with an `exc_info` that isn't `true` or `sys.exc_info()`.

Closes#2356.

Ruff allows rules to be enabled with `select` and disabled with

`ignore`, where the more specific rule selector takes precedence,

for example:

`--select ALL --ignore E501` selects all rules except E501

`--ignore ALL --select E501` selects only E501

(If both selectors have the same specificity ignore selectors

take precedence.)

Ruff always had two quirks:

* If `pyproject.toml` specified `ignore = ["E501"]` then you could

previously not override that with `--select E501` on the command-line

(since the resolution didn't take into account that the select was

specified after the ignore).

* If `pyproject.toml` specified `select = ["E501"]` then you could

previously not override that with `--ignore E` on the command-line

(since the resolution didn't take into account that the ignore was

specified after the select).

Since d067efe265 (#1245)

`extend-select` and `extend-ignore` always override

`select` and `ignore` and are applied iteratively in pairs,

which introduced another quirk:

* If some `pyproject.toml` file specified `extend-select`

or `extend-ignore`, `select` and `ignore` became pretty much

unreliable after that with no way of resetting that.

This commit fixes all of these quirks by making later configuration

sources take precedence over earlier configuration sources.

While this is a breaking change, we expect most ruff configuration

files to not rely on the previous unintutive behavior.

Previously we tested the resolve_codes helper function directly.

Since we want to rewrite our resolution logic in the next commit,

this commit changes the tests to test the more high-level From impl.

This PR fixes two related issues with using isort on files using tabs for indentation:

- Multiline imports are never considered correctly formatted, since the comparison with the generated code will always fail.

- Using autofix generates code that can have mixed indentation in the same line, for imports that are within nested blocks.

Previously Linter::parse_code("E401") returned

(Linter::Pycodestyle, "401") ... after this commit it returns

(Linter::Pycodestyle, "E401") instead, which is important

for the future implementation of the many-to-many mapping.

(The second value of the tuple isn't used currently.)

Fairly mechanical. Did a few of the simple cases manually to make sure things were working, and I think the rest will be easily achievable via a quick `fastmod` command.

ref #1871

I think we've never run into this case, since it's rare to import `*` from a module _and_ import some other member explicitly. But we were deviating from `isort` by placing the `*` after other members, rather than up-top.

Closes#2318.

`RUF100` does not take into account a rule ignored for a file via a `per-file-ignores` configuration. To see this, try the following pyproject.toml:

```toml

[tool.ruff.per-file-ignores]

"test.py" = ["F401"]

```

and this test.py file:

```python

import itertools # noqa: F401

```

Running `ruff --extend-select RUF100 test.py`, we should expect to get this error:

```

test.py:1:19: RUF100 Unused `noqa` directive (unused: `F401`)

```

The issue is that the per-file-ignores diagnostics are filtered out after the noqa checks, rather than before.

Fixes a regression introduced in eda2be6350 (but not yet released to users). (`-v` is a real flag, but it's an alias for `--verbose`, not `--version`.)

Closes#2299.

We probably want to introduce multiple explain subcommands and

overloading `explain` to explain it all seems like a bad idea.

We may want to introduce a subcommand to explain config options and

config options may end up having the same name as their rules, e.g. the

current `banned-api` is both a rule name (although not yet exposed to

the user) and a config option.

The idea is:

* `ruff rule` lists all rules supported by ruff

* `ruff rule <code>` explains a specific rule

* `ruff linter` lists all linters supported by ruff

* `ruff linter <name>` lists all rules/options supported by a specific linter

(After this commit only the 2nd case is implemented.)

This commit greatly simplifies the implementation of the CLI,

as well as the user expierence (since --help no longer lists all

options even though many of them are in fact incompatible).

To preserve backwards-compatability as much as possible aliases have

been added for the new subcommands, so for example the following two

commands are equivalent:

ruff explain E402 --format json

ruff --explain E402 --format json

However for this to work the legacy-format double-dash command has to

come first, i.e. the following no longer works:

ruff --format json --explain E402

Since ruff previously had an implicitly default subcommand,

this is preserved for backwards compatibility, i.e. the following two

commands are equivalent:

ruff .

ruff check .

Previously ruff didn't complain about several argument combinations that

should have never been allowed, e.g:

ruff --explain RUF001 --line-length 33

previously worked but now rightfully fails since the explain command

doesn't support a `--line-length` option.

I presume the reasoning for not including clippy in `pre-commit` was that it passes all files. This can be turned off with `pass_filenames`, in which case it only runs once.

`cargo +nightly dev generate-all` is also added (when excluding `target` is does not give false positives).

(The overhead of these commands is not much when the build is there. People can always choose to run only certain hooks with `pre-commit run [hook] --all-files`)

Accessed attributes that are Python constants should be considered for yoda-conditions

```py

## Error

JediOrder.YODA == age # SIM300

## OK

age == JediOrder.YODA

```

~~PS: This PR will fail CI, as the `main` branch currently failing.~~

SIM300 currently doesn't take Python constants into account when looking for Yoda conditions, this PR fixes that behavior.

```python

# Errors

YODA == age # SIM300

YODA > age # SIM300

YODA >= age # SIM300

# OK

age == YODA

age < YODA

age <= YODA

```

Ref: <https://github.com/home-assistant/core/pull/86793>

This isn't super consistent with some other rules, but... if you have a lone body, with a `pass`, followed by a comment, it's probably surprising if it gets removed. Let's retain the comment.

Closes#2231.

Fixes a regression introduced in 4e4643aa5d.

We want the longest prefixes to be checked first so we of course

have to reverse the sorting when sorting by prefix length.

Fixes#2210.

`ruff --help` previously listed 37 options in no particular order

(with niche options like --isolated being listed before before essential

options such as --select). This commit remedies that and additionally

groups the options by making use of the Clap help_heading feature.

Note that while the source code has previously also referred to

--add-noqa, --show-settings, and --show-files as "subcommands"

this commit intentionally does not list them under the new

Subcommands section since contrary to --explain and --clean

combining them with most of the other options makes sense.

These were split into per-project licenses in #1648, but I don't like that they're no longer included in the distribution (due to current limitations in the `pyproject.toml` spec).

After this change:

```shell

> time cargo run -- -n $(find ../django -type f -name '*.py')`

8.85s user 0.20s system 498% cpu 1.814 total

> time cargo run -- -n ../django

8.95s user 0.23s system 507% cpu 1.811 total

```

I also verified that we only hit the creation path once via some manual logging.

Closes#2154.

We already enforced pedantic clippy lints via the

following command in .github/workflows/ci.yaml:

cargo clippy --workspace --all-targets --all-features -- -D warnings -W clippy::pedantic

Additionally adding #![warn(clippy::pedantic)] to all main.rs and lib.rs

has the benefit that violations of pedantic clippy lints are also

reported when just running `cargo clippy` without any arguments and

are thereby also picked up by LSP[1] servers such as rust-analyzer[2].

However for rust-analyzer to run clippy you'll have to configure:

"rust-analyzer.check.command": "clippy",

in your editor.[3]

[1]: https://microsoft.github.io/language-server-protocol/

[2]: https://rust-analyzer.github.io/

[3]: https://rust-analyzer.github.io/manual.html#configuration

From discussion on https://github.com/charliermarsh/ruff/pull/2123

I didn't originally have a helpers file so I put the function in both

places but now that a helpers file exists it seems logical for it to be

there.

This commit removes rule redirects such as ("U" -> "UP") from the

RuleCodePrefix enum because they complicated the generation of that enum

(which we want to change to be prefix-agnostic in the future).

To preserve backwards compatibility redirects are now resolved

before the strum-generated RuleCodePrefix::from_str is invoked.

This change also brings two other advantages:

* Redirects are now only defined once

(previously they had to be defined twice:

once in ruff_macros/src/rule_code_prefix.rs

and a second time in src/registry.rs).

* The deprecated redirects will no longer be suggested in IDE

autocompletion within pyproject.toml since they are now no

longer part of the ruff.schema.json.

Yet another refactor to let us implement the many-to-many mapping

between codes and rules in a prefix-agnostic way.

We want to break up the RuleCodePrefix[1] enum into smaller enums.

To facilitate that this commit introduces a new wrapping type around

RuleCodePrefix so that we can start breaking it apart.

[1]: Actually `RuleCodePrefix` is the previous name of the autogenerated

enum ... I renamed it in b19258a243 to

RuleSelector since `ALL` isn't a prefix. This commit now renames it back

but only because the new `RuleSelector` wrapper type, introduced in this

commit, will let us move the `ALL` variant from `RuleCodePrefix` to

`RuleSelector` in the next commit.

At present, `ISC001` and `ISC002` flag concatenations like the following:

```py

"a" "b" # ISC001

"a" \

"b" # ISC002

```

However, multiline concatenations are allowed.

This PR adds a setting:

```toml

[tool.ruff.flake8-implicit-str-concat]

allow-multiline = false

```

Which extends `ISC002` to _also_ flag multiline concatenations, like:

```py

(

"a" # ISC002

"b"

)

```

Note that this is backwards compatible, as `allow-multiline` defaults to `true`.

Ruff supports more than `known-first-party`, `known-third-party`, `extra-standard-library`, and `src` nowadays.

Not sure if this is the best wording. Suggestions welcome!

Extend test fixture to verify the targeting.

Includes two "attribute docstrings" which per PEP 257 are not recognized by the Python bytecode compiler or available as runtime object attributes. They are not available for us either at time of writing, but include them for completeness anyway in case they one day are.

To enable ruff_dev to automatically generate the rule Markdown tables in

the README the ruff library contained the following function:

Linter::codes() -> Vec<RuleSelector>

which was slightly changed to `fn prefixes(&self) -> Prefixes` in

9dc66b5a65 to enable ruff_dev to split

up the Markdown tables for linters that have multiple prefixes

(pycodestyle has E & W, Pylint has PLC, PLE, PLR & PLW).

The definition of this method was however largely redundant with the

#[prefix] macro attributes in the Linter enum, which are used to

derive the Linter::parse_code function, used by the --explain command.

This commit removes the redundant Linter::prefixes by introducing a

same-named method with a different signature to the RuleNamespace trait:

fn prefixes(&self) -> &'static [&'static str];

As well as implementing IntoIterator<Rule> for &Linter. We extend the

extisting RuleNamespace proc macro to automatically derive both

implementations from the Linter enum definition.

To support the previously mentioned Markdown table splitting we

introduce a very simple hand-written method to the Linter impl:

fn categories(&self) -> Option<&'static [LinterCategory]>;

ParseCode was a fitting name since the trait only contained a single

parse_code method ... since we now however want to introduce an

additional `prefixes` method RuleNamespace is more fitting.

Using Ident as the key type is inconvenient since creating an Ident

requires the specification of a Span, which isn't actually used by

the Hash implementation of Ident.

If a file doesn't have a `package`, then it must both be in a directory that lacks an `__init__.py`, and a directory that _isn't_ marked as a namespace package.

Closes#2075.

- optional `prefix` argument for `add_plugin.py`

- rules directory instead of `rules.rs`

- pathlib syntax

- fix test case where code was added instead of name

Example:

```

python scripts/add_plugin.py --url https://pypi.org/project/example/1.0.0/ example --prefix EXA

python scripts/add_rule.py --name SecondRule --code EXA002 --linter example

python scripts/add_rule.py --name FirstRule --code EXA001 --linter example

python scripts/add_rule.py --name ThirdRule --code EXA003 --linter example

```

Note that it breaks compatibility with 'old style' plugins (generation works fine, but namespaces need to be changed):

```

python scripts/add_rule.py --name DoTheThing --code PLC999 --linter pylint

```

## Summary

The problem: given a (row, column) number (e.g., for a token in the AST), we need to be able to map it to a precise byte index in the source code. A while ago, we moved to `ropey` for this, since it was faster in practice (mostly, I think, because it's able to defer indexing). However, at some threshold of accesses, it becomes faster to index the string in advance, as we're doing here.

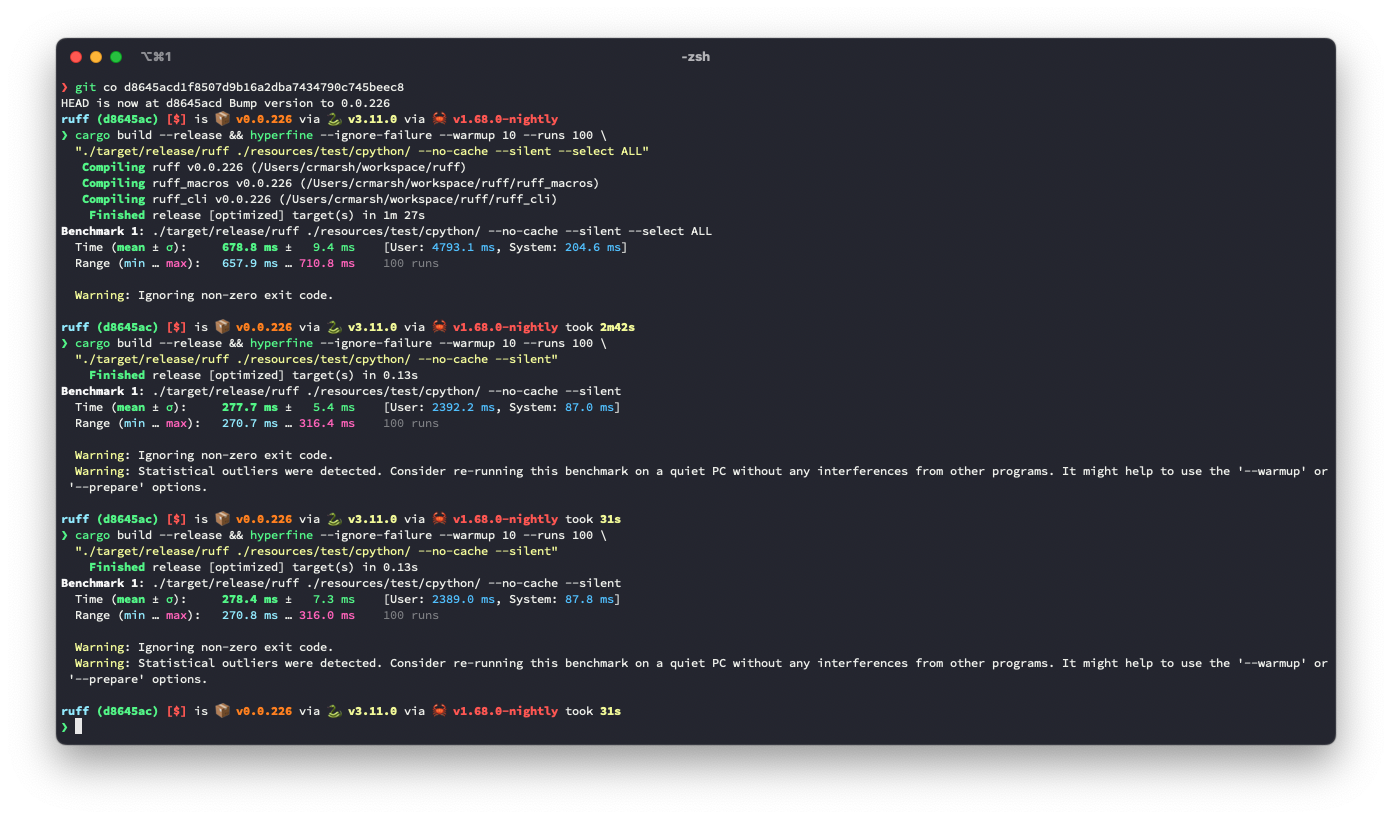

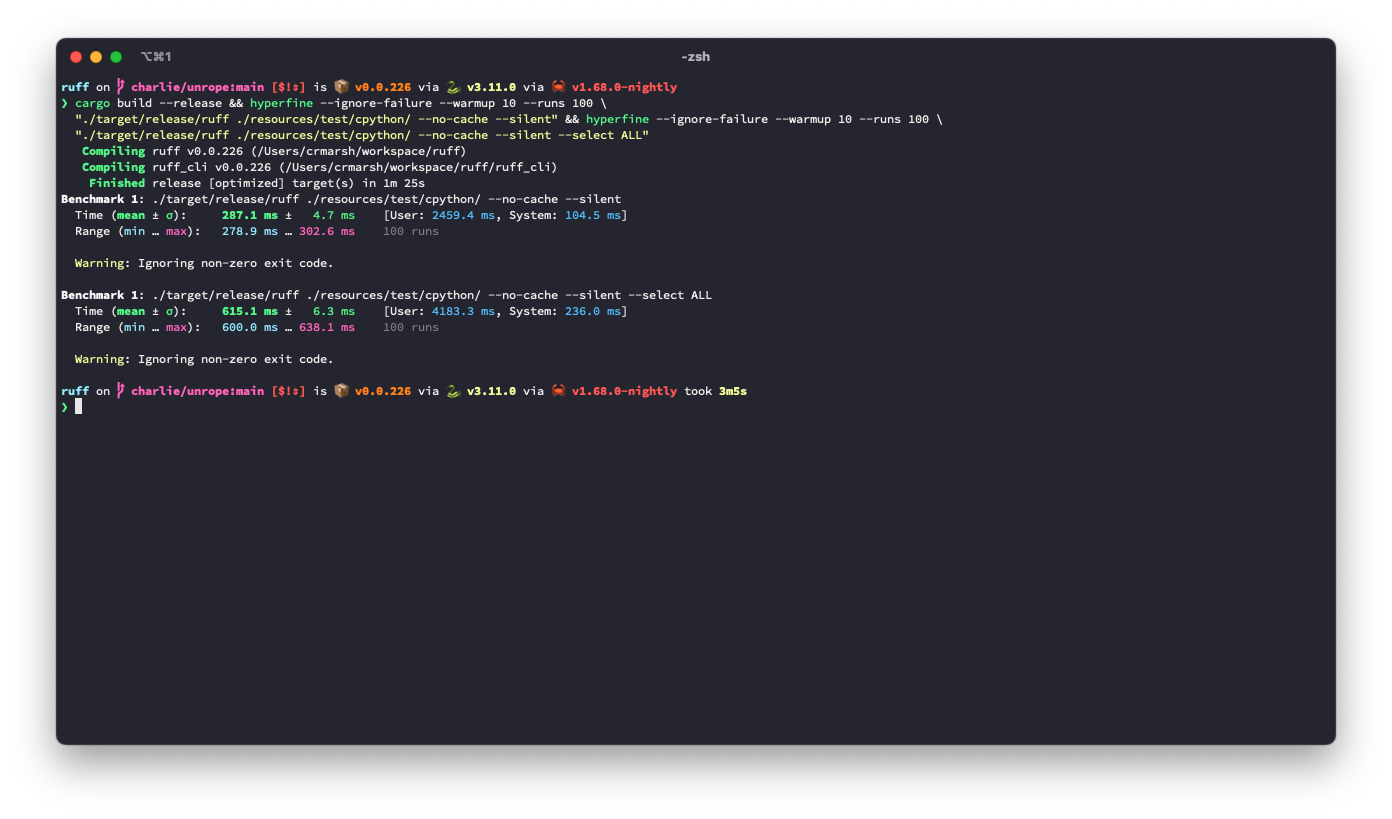

## Benchmark

It looks like this is ~3.6% slower for the default rule set, but ~9.3% faster for `--select ALL`.

**I suspect there's a strategy that would be strictly faster in both cases**, based on deferring even more computation (right now, we lazily compute these offsets, but we do it for the entire file at once, even if we only need some slice at the top), or caching the `ropey` lookups in some way.

Before:

After:

## Alternatives

I tried tweaking the `Vec::with_capacity` hints, and even trying `Vec::with_capacity(str_indices::lines_crlf::count_breaks(contents))` to do a quick scan of the number of lines, but that turned out to be slower.

Add tests.

Ensure that these cases are caught by ICN001:

```python

from xml.dom import minidom

from xml.dom.minidom import parseString

```

with config:

```toml

[tool.ruff.flake8-import-conventions.extend-aliases]

"dask.dataframe" = "dd"

"xml.dom.minidom" = "md"

"xml.dom.minidom.parseString" = "pstr"

```

This _did_ fix https://github.com/charliermarsh/ruff/issues/1894, but was a little premature. `toml` doesn't actually depend on `toml-edit` yet, and `v0.5.11` was mostly about deprecations AFAICT. So upgrading might solve that issue, but could introduce other incompatibilities, and I'd like to minimize churn. I expect that `toml` will have a new release soon, so we can revert this revert.

Reverts charliermarsh/ruff#2040.

The idea is the same as #1867. Avoids emitting `SIM102` twice for the following code:

```python

if a:

if b:

if c:

d

```

```

resources/test/fixtures/flake8_simplify/SIM102.py:1:1: SIM102 Use a single `if` statement instead of nested `if` statements

resources/test/fixtures/flake8_simplify/SIM102.py:2:5: SIM102 Use a single `if` statement instead of nested `if` statements

```

This PR adds the scaffolding files for `flake8-type-checking`, along with the simplest rule (`empty-type-checking-block`), just as an example to get us started.

See: #1785.

543865c96b introduced

RuleCode::origin() -> RuleOrigin generation via a macro, while that

signature now has been renamed to Rule::origin() -> Linter we actually

want to get rid of it since rules and linters shouldn't be this tightly

coupled (since one rule can exist in multiple linters).

Another disadvantage of the previous approach was that the prefixes

had to be defined in ruff_macros/src/prefixes.rs, which was easy to

miss when defining new linters in src/*, case in point

INP001 => violations::ImplicitNamespacePackage has in the meantime been

added without ruff_macros/src/prefixes.rs being updated accordingly

which resulted in `ruff --explain INP001` mistakenly reporting that the

rule belongs to isort (since INP001 starts with the isort prefix "I").

The derive proc macro introduced in this commit requires every variant

to have at least one #[prefix = "..."], eliminating such mistakes.

More accurate since the enum also encompasses:

* ALL (which isn't a prefix at all)

* fully-qualified rule codes (which aren't prefixes unless you say

they're a prefix to the empty string but that's not intuitive)

"origin" was accurate since ruff rules are currently always modeled

after one origin (except the Ruff-specific rules).

Since we however want to introduce a many-to-many mapping between codes

and rules, the term "origin" no longer makes much sense. Rules usually

don't have multiple origins but one linter implements a rule first and

then others implement it later (often inspired from another linter).

But we don't actually care much about where a rule originates from when

mapping multiple rule codes to one rule implementation, so renaming

RuleOrigin to Linter is less confusing with the many-to-many system.

Tracking issue: https://github.com/charliermarsh/ruff/issues/2024

Implementation for EXE003, EXE004 and EXE005 of `flake8-executable`

(shebang should contain "python", not have whitespace before, and should be on the first line)

Please take in mind that this is my first rust contribution.

The remaining EXE-rules are a combination of shebang (`lines.rs`), file permissions (`fs.rs`) and if-conditions (`ast.rs`). I was not able to find other rules that have interactions/dependencies in them. Any advice on how this can be best implemented would be very welcome.

For autofixing `EXE005`, I had in mind to _move_ the shebang line to the top op the file. This could be achieved by a combination of `Fix::insert` and `Fix::delete` (multiple fixes per diagnostic), or by implementing a dedicated `Fix::move`, or perhaps in other ways. For now I've left it out, but keen on hearing what you think would be most consistent with the package, and pointer where to start (if at all).

---

If you care about another testimonial:

`ruff` not only helps staying on top of the many excellent flake8 plugins and other Python code quality tools that are available, it also applies them at baffling speed.

(Planning to implement it soon for github.com/pandas-profiling/pandas-profiling (as largest contributor) and github.com/ing-bank/popmon.)

Rule described here: https://www.flake8rules.com/rules/E101.html

I tried to follow contributing guidelines closely, I've never worked with Rust before. Stumbled across Ruff a few days ago and would like to use it in our project, but we use a bunch of flake8 rules that are not yet implemented in ruff, so I decided to give it a go.

Following up on #2018/#2019 discussion, this moves the readme's development-related bits to `CONTRIBUTING.md` to avoid duplication, and fixes up the commands accordingly 😄

As per Cargo.toml our minimal supported Rust version is 1.65.0, so we

should be using that version in our CI for cargo test and cargo build.

This was apparently accidentally changed in

79ca66ace5.

Previous output for `ruff --explain E711`:

E711 (pycodestyle): Comparison to `None` should be `cond is None`

New output:

none-comparison

Code: E711 (pycodestyle)

Autofix is always available.

Message formats:

* Comparison to `None` should be `cond is None`

* Comparison to `None` should be `cond is not None`

For now, we're just gonna avoid flagging this for `elif` blocks, following the same reasoning as for ternaries. We can handle all of these cases, but we'll knock out the TODOs as a pair, and this avoids broken code.

Closes#2007.

As we surface rule names more to users we want

them to be easier to type than PascalCase.

Prior art:

Pylint and ESLint also use kebab-case for their rule names.

Clippy uses snake_case but only for syntactical reasons

(so that the argument to e.g. #![allow(clippy::some_lint)]

can be parsed as a path[1]).

[1]: https://doc.rust-lang.org/reference/paths.html

This PR adds a new check that turns expressions such as `[1, 2, 3] + foo` into `[1, 2, 3, *foo]`, since the latter is easier to read and faster:

```

~ $ python3.11 -m timeit -s 'b = [6, 5, 4]' '[1, 2, 3] + b'

5000000 loops, best of 5: 81.4 nsec per loop

~ $ python3.11 -m timeit -s 'b = [6, 5, 4]' '[1, 2, 3, *b]'

5000000 loops, best of 5: 66.2 nsec per loop

```

However there's a couple of gotchas:

* This felt like a `simplify` rule, so I borrowed an unused `SIM` code even if the upstream `flake8-simplify` doesn't do this transform. If it should be assigned some other code, let me know 😄

* **More importantly** this transform could be unsafe if the other operand of the `+` operation has overridden `__add__` to do something else. What's the `ruff` policy around potentially unsafe operations? (I think some of the suggestions other ported rules give could be semantically different from the original code, but I'm not sure.)

* I'm not a very established Rustacean, so there's no doubt my code isn't quite idiomatic. (For instance, is there a neater way to write that four-way `match` statement?)

Thanks for `ruff`, by the way! :)

This commit fixes a bug accidentally introduced in

6cf770a692,

which resulted every `ruff --explain <code>` invocation to fail with:

thread 'main' panicked at 'Mismatch between definition and access of `explain`.

Could not downcast to ruff::registry::Rule, need to downcast to &ruff::registry::Rule',

ruff_cli/src/cli.rs:184:18

We also add an integration test for --explain to prevent such bugs from

going by unnoticed in the future.

# This commit has been generated via the following Python script:

# (followed by `cargo +nightly fmt` and `cargo dev generate-all`)

# For the reasoning see the previous commit(s).

import re

import sys

for path in (

'src/violations.rs',

'src/rules/flake8_tidy_imports/banned_api.rs',

'src/rules/flake8_tidy_imports/relative_imports.rs',

):

with open(path) as f:

text = ''

while line := next(f, None):

if line.strip() != 'fn message(&self) -> String {':

text += line

continue

text += ' #[derive_message_formats]\n' + line

body = next(f)

while (line := next(f)) != ' }\n':

body += line

# body = re.sub(r'(?<!code\| |\.push\()format!', 'format!', body)

body = re.sub(

r'("[^"]+")\s*\.to_string\(\)', r'format!(\1)', body, re.DOTALL

)

body = re.sub(

r'(r#".+?"#)\s*\.to_string\(\)', r'format!(\1)', body, re.DOTALL

)

text += body + ' }\n'

while (line := next(f)).strip() != 'fn placeholder() -> Self {':

text += line

while (line := next(f)) != ' }\n':

pass

with open(path, 'w') as f:

f.write(text)

The idea is nice and simple we replace:

fn placeholder() -> Self;

with

fn message_formats() -> &'static [&'static str];

So e.g. if a Violation implementation defines:

fn message(&self) -> String {

format!("Local variable `{name}` is assigned to but never used")

}

it would also have to define:

fn message_formats() -> &'static [&'static str] {

&["Local variable `{name}` is assigned to but never used"]

}

Since we however obviously do not want to duplicate all of our format

strings we simply introduce a new procedural macro attribute

#[derive_message_formats] that can be added to the message method

declaration in order to automatically derive the message_formats

implementation.

This commit implements the macro. The following and final commit

updates violations.rs to use the macro. (The changes have been separated

because the next commit is autogenerated via a Python script.)

ruff_dev::generate_rules_table previously documented which rules are

autofixable via DiagnosticKind::fixable ... since the DiagnosticKind was

obtained via Rule::kind (and Violation::placeholder) which we both want

to get rid of we have to obtain the autofixability via another way.

This commit implements such another way by adding an AUTOFIX

associated constant to the Violation trait. The constant is of the type

Option<AutoFixkind>, AutofixKind is a new struct containing an

Availability enum { Sometimes, Always}, letting us additionally document

that some autofixes are only available sometimes (which previously

wasn't documented). We intentionally introduce this information in a

struct so that we can easily introduce further autofix metadata in the

future such as autofix applicability[1].

[1]: https://doc.rust-lang.org/stable/nightly-rustc/rustc_errors/enum.Applicability.html

While ruff displays the string returned by Violation::message in its

output for detected violations the messages displayed in the README

and in the `--explain <code>` output previously used the

DiagnosticKind::summary() function which for some verbose messages

provided shorter descriptions.

This commit removes DiagnosticKind::summary, and moves the more

extensive documentation into doc comments ... these are not displayed

yet to the user but doing that is very much planned.

This commit series removes the following associated

function from the Violation trait:

fn placeholder() -> Self;

ruff previously used this placeholder approach for the messages it

listed in the README and displayed when invoked with --explain <code>.

This approach is suboptimal for three reasons:

1. The placeholder implementations are completely boring code since they

just initialize the struct with some dummy values.

2. Displaying concrete error messages with arbitrary interpolated values

can be confusing for the user since they might not recognize that the

values are interpolated.

3. Some violations have varying format strings depending on the

violation which could not be documented with the previous approach

(while we could have changed the signature to return Vec<Self> this

would still very much suffer from the previous two points).

We therefore drop Violation::placeholder in favor of a new macro-based

approach, explained in commit 4/5.

Violation::placeholder is only invoked via Rule::kind, so we firstly

have to get rid of all Rule::kind invocations ... this commit starts

removing the trivial cases.

Fixes: #1953

@charliermarsh thank you for the tips in the issue.

I'm not very familiar with Rust, so please excuse if my string formatting syntax is messy.

In terms of testing, I compared output of `flake8 --format=pylint ` and `cargo run --format=pylint` on the same code and the output syntax seems to check out.

Since the UI still relies on the rule codes this improves the developer

experience by letting developers view the code of a Rule enum variant by

hovering over it.

# This commit was automatically generated by running the following

# script (followed by `cargo +nightly fmt`):

import glob

import re

from typing import NamedTuple

class Rule(NamedTuple):

code: str

name: str

path: str

def rules() -> list[Rule]:

"""Returns all the rules defined in `src/registry.rs`."""

file = open('src/registry.rs')

rules = []

while next(file) != 'ruff_macros::define_rule_mapping!(\n':

continue

while (line := next(file)) != ');\n':

line = line.strip().rstrip(',')

if line.startswith('//'):

continue

code, path = line.split(' => ')

name = path.rsplit('::')[-1]

rules.append(Rule(code, name, path))

return rules

code2name = {r.code: r.name for r in rules()}

for pattern in ('src/**/*.rs', 'ruff_cli/**/*.rs', 'ruff_dev/**/*.rs', 'scripts/add_*.py'):

for name in glob.glob(pattern, recursive=True):

with open(name) as f:

text = f.read()

text = re.sub('Rule(?:Code)?::([A-Z]\w+)', lambda m: 'Rule::' + code2name[m.group(1)], text)

text = re.sub(r'(?<!"<FilePattern>:<)RuleCode\b', 'Rule', text)

text = re.sub('(use crate::registry::{.*, Rule), Rule(.*)', r'\1\2', text) # fix duplicate import

with open(name, 'w') as f:

f.write(text)

This commit series refactors ruff to decouple "rules" from "rule codes",

in order to:

1. Make our code more readable by changing e.g.

RuleCode::UP004 to Rule::UselessObjectInheritance.

2. Let us cleanly map multiple codes to one rule, for example:

[UP004] in pyupgrade, [R0205] in pylint and [PIE792] in flake8-pie

all refer to the rule UselessObjectInheritance but ruff currently

only associates that rule with the UP004 code (since the

implementation was initially modeled after pyupgrade).

3. Let us cleanly map one code to multiple rules, for example:

[C0103] from pylint encompasses N801, N802 and N803 from pep8-naming.

The latter two steps are not yet implemented by this commit series

but this refactoring enables us to introduce such a mapping. Such a

mapping would also let us expand flake8_to_ruff to support e.g. pylint.

After the next commit which just does some renaming the following four

commits remove all trait derivations from the Rule (previously RuleCode)

enum that depend on the variant names to guarantee that they are not

used anywhere anymore so that we can rename all of these variants in the

eigth and final commit without breaking anything.

While the plan very much is to also surface these human-friendly names

more in the user interface this is not yet done in this commit series,

which does not change anything about the UI: it's purely a refactor.

[UP004]: pyupgrade doesn't actually assign codes to its messages.

[R0205]: https://pylint.pycqa.org/en/latest/user_guide/messages/refactor/useless-object-inheritance.html

[PIE792]: https://github.com/sbdchd/flake8-pie#pie792-no-inherit-object

[C0103]: https://pylint.pycqa.org/en/latest/user_guide/messages/convention/invalid-name.html

This is slightly buggy due to Instagram/LibCST#855; it will complain `[ERROR] Failed to fix nested with: Failed to extract CST from source` when trying to fix nested parenthesized `with` statements lacking trailing commas. But presumably people who write parenthesized `with` statements already knew that they don’t need to nest them.

Signed-off-by: Anders Kaseorg <andersk@mit.edu>

Some files were not shown because too many files have changed in this diff

Show More

Reference in New Issue

Block a user

Blocking a user prevents them from interacting with repositories, such as opening or commenting on pull requests or issues. Learn more about blocking a user.